In this paper, we explore the effects of language variants, data sizes, and fine-tuning task types in Arabic pre-trained language models. To do so, we build three pre-trained language models across three variants of Arabic: Modern Standard Arabic (MSA), dialectal Arabic, and classical Arabic, in addition to a fourth language model which is pre-trained on a mix of the three. We also examine the importance of pre-training data size by building additional models that are pre-trained on a scaled-down set of the MSA variant. We compare our different models to each other, as well as to eight publicly available models, by fine-tuning them on five NLP tasks spanning 12 datasets. Our results suggest that the variant proximity of pre-training data to fine-tuning data is more important than the pre-training data size. We exploit this insight in defining an optimized system selection model for the studied tasks.

The paper, "The Interplay of Variant, Size, and Task Type in Arabic Pre-trained Language Models," appears in the Proceedings of the Sixth Arabic Natural Language Processing Workshop, published by the Association for Computational Linguistics.

To use the model with a transformers pipeline:

```python
from transformers import pipeline
pos = pipeline('token-classification', model='CAMeL-Lab/bert-base-arabic-camelbert-ca-pos-egy')
```

This model will also be available in CAMeL Tools soon.
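A token-classification pipeline like the one above typically returns one prediction per WordPiece token, so a single word may come back as several pieces, with continuation pieces prefixed by `##`. The sketch below shows one way to collapse such output into word-level `(word, tag)` pairs. The helper name `merge_subword_tags`, the sample predictions, and the tag names in them are hypothetical and hand-written for illustration; they are not actual output of the CAMeLBERT-CA POS-EGY model.

```python
from typing import Dict, List, Tuple


def merge_subword_tags(predictions: List[Dict]) -> List[Tuple[str, str]]:
    """Collapse WordPiece-level predictions into (word, tag) pairs.

    Continuation pieces start with "##". Each one is glued onto the
    previous word, which keeps the tag predicted for its first piece.
    """
    merged: List[Tuple[str, str]] = []
    for pred in predictions:
        piece, tag = pred["word"], pred["entity"]
        if piece.startswith("##") and merged:
            word, first_tag = merged[-1]
            merged[-1] = (word + piece[2:], first_tag)
        else:
            merged.append((piece, tag))
    return merged


# Hypothetical predictions in the shape the pipeline returns
# (a list of dicts with "word", "entity", "score", and "index" keys).
sample = [
    {"word": "كتب", "entity": "verb", "score": 0.99, "index": 1},
    {"word": "الول", "entity": "noun", "score": 0.98, "index": 2},
    {"word": "##د", "entity": "noun", "score": 0.97, "index": 3},
]

for word, tag in merge_subword_tags(sample):
    print(word, tag)
```

Keeping the first piece's tag is a simple convention, not necessarily what the model authors use. The pipeline also accepts an `aggregation_strategy` argument that may make a manual merge like this unnecessary.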

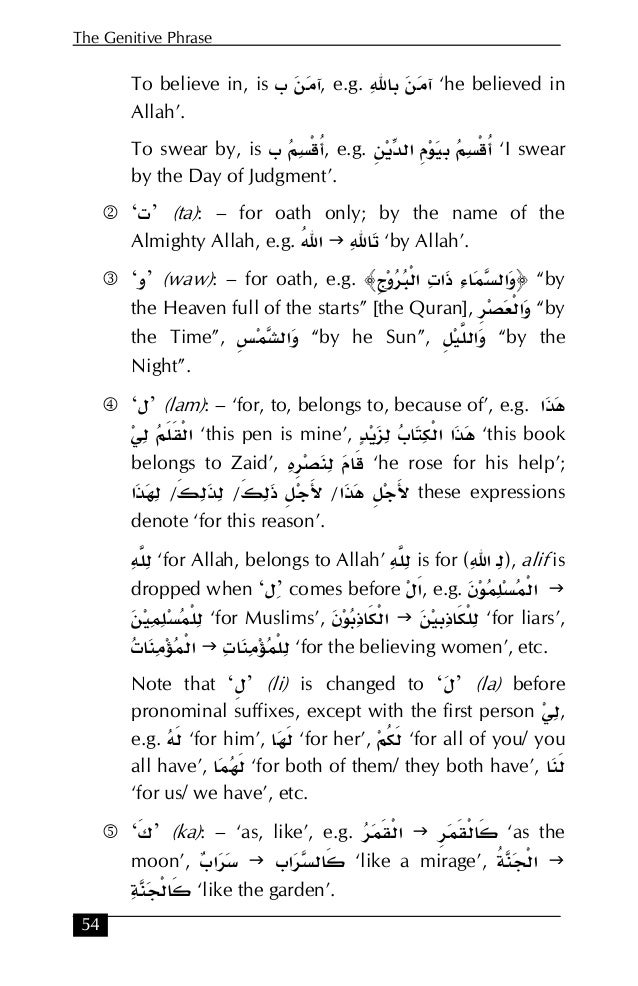

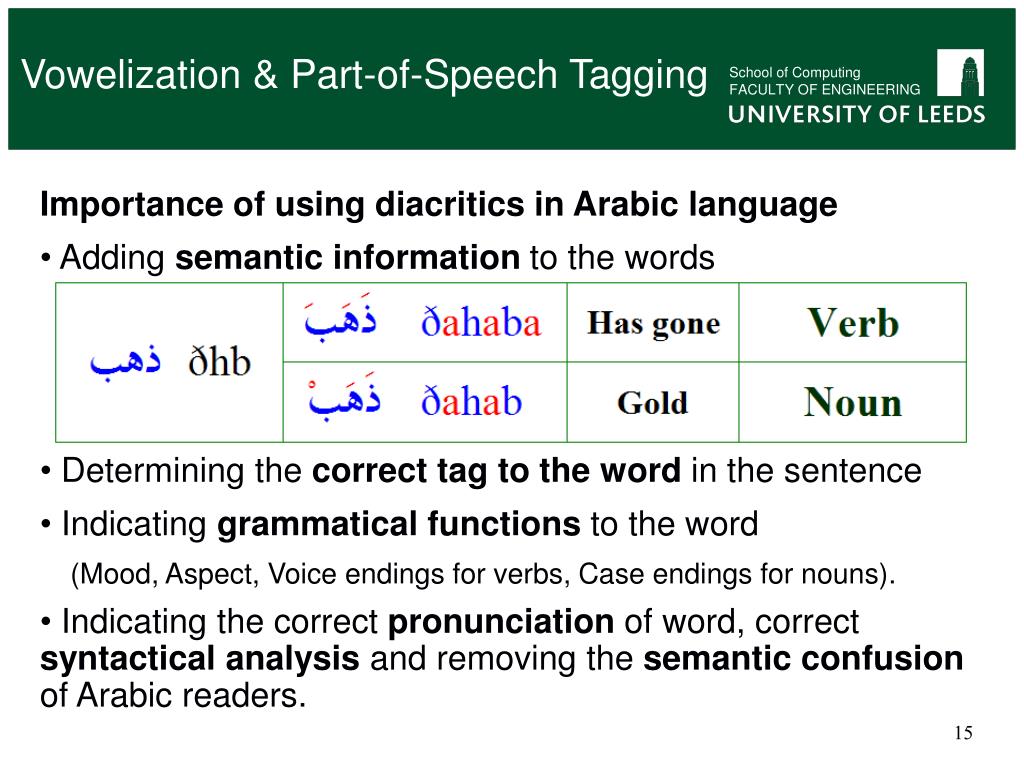

The study described in this paper belongs to the area of computational linguistics, a field of artificial intelligence that deals with the logical modeling of natural language from a computational perspective. It unites two areas that look quite different: computer science and natural languages. Computational linguistics can be treated as a synonym for the automatic processing of natural language, since its main task is the construction of computer programs that process words and texts in natural language. Many areas fall within the discipline, and one of them is part-of-speech tagging (POS-tagging). POS-tagging is the process of automatically assigning the proper grammatical tag to each word of a written text according to how it appears in the text. The task therefore consists of attaching appropriate grammatical or morpho-syntactic category labels to each word, token, symbol, abbreviation, and even punctuation mark in a corpus. POS-tagging is usually the first step in linguistic analysis, and it is a very important intermediate step in building many natural language processing applications. It can be used in spell checking and correction systems, speech recognition systems, information retrieval systems, and text-to-speech synthesis systems.

The CAMeLBERT-CA POS-EGY model is an Egyptian Arabic POS tagging model that was built by fine-tuning the CAMeLBERT-CA model. For the fine-tuning, we used the ARZTB dataset. Our fine-tuning procedure and the hyperparameters we used can be found in our paper "The Interplay of Variant, Size, and Task Type in Arabic Pre-trained Language Models." Our fine-tuning code can be found here. You can use the CAMeLBERT-CA POS-EGY model as part of the transformers pipeline.

I am trying to use the Stanford POS Tagger in NLTK 3.2.4 on Arabic text using Python 3.6. I found some source code, but I did not understand most of it because I am totally new to the Stanford POS Tagger.
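For the Stanford POS Tagger question above, a minimal sketch of calling the tagger through NLTK might look like the following. It assumes Java is installed and that the Stanford tagger jar and an Arabic model file have been downloaded separately. The function names `tag_arabic` and `normalize_pairs` and the file paths in the usage comment are hypothetical. The helper also handles a quirk some users have reported with the Arabic model, where the word and tag come back fused as `word/TAG` in the second slot of each pair; treat that as an assumption to check against your own output.

```python
from typing import List, Tuple


def normalize_pairs(pairs: List[Tuple[str, str]]) -> List[Tuple[str, str]]:
    """Return clean (word, tag) pairs.

    Assumption: with some models the tagger yields ('', 'word/TAG')
    instead of ('word', 'TAG'). In that case, split on the last '/'.
    """
    cleaned = []
    for word, tag in pairs:
        if not word and "/" in tag:
            word, tag = tag.rsplit("/", 1)
        cleaned.append((word, tag))
    return cleaned


def tag_arabic(tokens: List[str], model_path: str, jar_path: str) -> List[Tuple[str, str]]:
    """Tag pre-tokenized Arabic text with the Stanford tagger via NLTK."""
    # Imported lazily so normalize_pairs works without NLTK or Java installed.
    from nltk.tag import StanfordPOSTagger

    tagger = StanfordPOSTagger(model_path, path_to_jar=jar_path, encoding="utf8")
    return normalize_pairs(tagger.tag(tokens))


# Hand-written pairs (illustrative tags) showing both shapes the helper accepts.
raw = [("", "الولد/NN"), ("كتب", "VBD")]
print(normalize_pairs(raw))

# Usage with hypothetical local paths:
# pairs = tag_arabic("كتب الولد".split(), "models/arabic.tagger", "stanford-postagger.jar")
```

The tagger expects a list of tokens rather than a raw string, so the text has to be split into words first. Whitespace splitting, as in the usage comment, is only a rough stand-in for a proper Arabic tokenizer.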
